AI is changing video games — and striking performers want their due

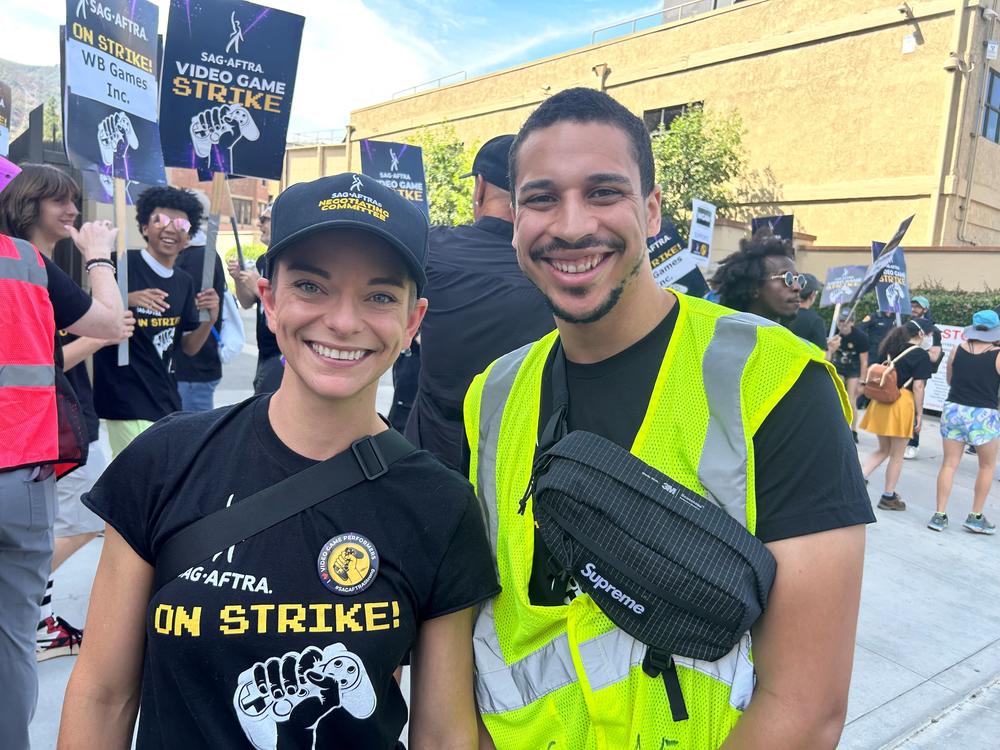

Jasiri Booker’s parkour and breaking movements are used to animate the title character in Marvel’s Spider-Man: Miles Morales video game.

“I stick to walls. I beat people up. I get beaten up constantly, get electrocuted and turn invisible,” the 26-year-old says.

He and other performers act out action sequences that make video games come to life.

But earlier this month, Booker picketed outside Warner Bros. Studios in Burbank, Calif., along with hundreds of other video game performers and members of the union SAG-AFTRA. They plan to picket again outside Disney Character Voices in Burbank on Thursday.

After 18 months of contract negotiations, they began their work stoppage in late July against video game companies such as Disney, WB Games, Microsoft’s Activision, and Electronic Arts. Members of the union have paused voice acting, stunts, and other work they do for video games.

The bargaining talks stalled over language about protections from the use of artificial intelligence in video game production. Booker says he’s not completely against the use of AI, but “we're saying at the very least, please inform us and allow us to consent to the performances that you are generating with our AI doubles.” He and other members of SAG-AFTRA are upset over the idea that video game companies could eventually replace them and may now see their very human stunts as simply digital reference points for animation.

In a statement, a spokesperson for the companies, Audrey Cooling, wrote, “Under our AI proposal, if we want to use a digital replica of an actor to generate a new performance of them in a game, we have to seek consent and pay them fairly for its use. These are robust protections, which are entirely consistent with or better than other entertainment industry agreements the union has signed.”

But video game doubles say those protections don’t extend to all of them – and that’s part of why they’re on strike.

Andi Norris, a performer on the union’s negotiating team, says that under the gaming companies’ proposal, performers whose body movements are captured for video games wouldn't be granted the same AI protections as those whose faces and voices are captured for games.

Norris says the companies are trying to get around paying the body movement performers at the same rate as others, “because essentially at that point they just consider us data.” She says, “I can crawl all over the floor and the walls as such-and-such creature, and they will argue that is not performance, and so that is not subject to their AI protections.”

It’s a nuanced distinction: the companies have included “performance capture” in their proposal, covering recordings of voice and face performers, but not the behind-the-scenes “motion capture” work of body doubles and other movement performers, which is used to render motion.

But Norris and others like her consider themselves “performance capture artists” – “because if all you were capturing is motion, then why are you hiring a performer?”

How motion capture works

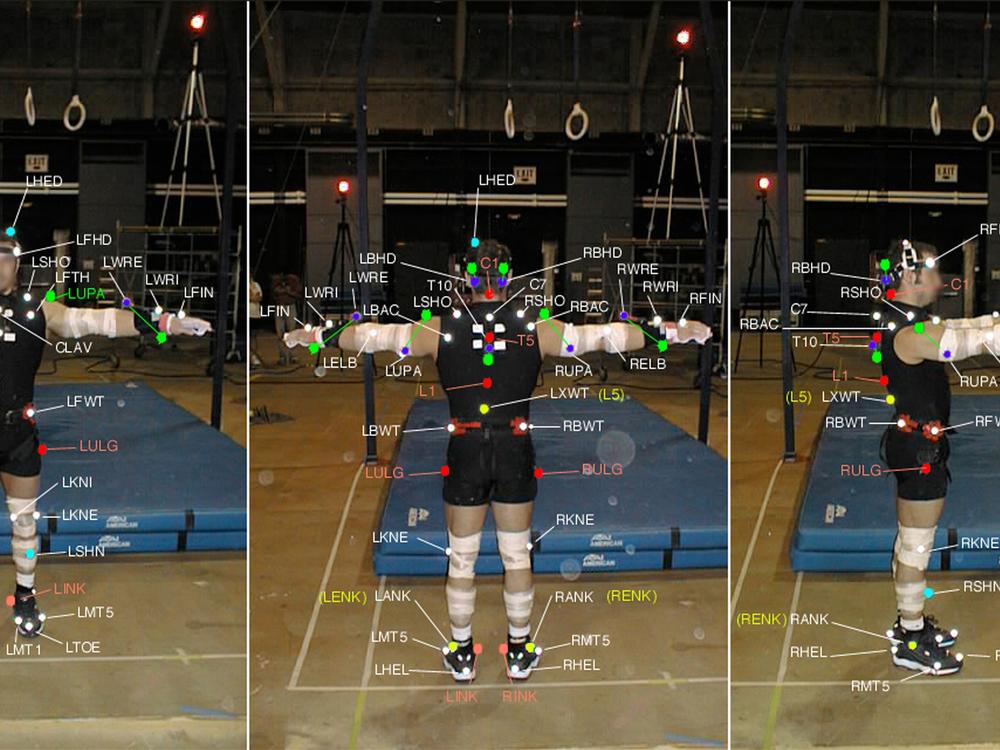

Another Spider-Man double, Seth Allyn Austin, says video game performance artists work in studio spaces known as “volumes,” surrounded by digital cameras. They wear full body suits – a bit like wetsuits – dotted with reflective sensors captured by cameras, “so the computer can have our skeletons and they can put whatever they want on us.”

Those digitized moving skeletons are fed into video software and then rendered into animated video game characters, says mechanical engineer Alberto Menache, cofounder of NPCx, which develops AI tools to capture human motion data for video games and movies. “Motion capture,” he says. “They call it mocap for short.”

Menache is a pioneer in the field, and has consulted on or supervised the visual effects for films including The Polar Express, Spider-Man, Superman Returns, and Avatar: The Way of Water. He’s also worked at PDI (which became DreamWorks Animation before shuttering), Sony Pictures, Microsoft, Lucasfilm and Electronic Arts. (Electronic Arts and Activision, which is owned by Microsoft, are both involved in the negotiations with SAG-AFTRA and in the current work stoppage.)

It takes an entire crew of digital artists, he says, to animate the motions created by human performers. “You need a modeler to build the character, then you need a person doing the texture mapping, as it's called, which is painting the body or painting the Spider-Man suit,” he says. “Then you need a rigger, which is the person that draws the skeleton, and then you need an animator to move the skeleton. And then you need someone to light the character.”

From hand-drawn animation to motion capture

During the silent picture era more than a century ago, hand-drawn animators began using live-action footage of humans. They created sequences by tracing over projected images, frame by frame – a time-consuming process that became known as “rotoscoping.” Filmmaker Max Fleischer patented the first Rotoscope in 1915, creating short films by hand-drawing over hand-cranked footage of his brother as the character Koko the Clown. According to Fleischer Studios, one minute of film time initially required almost 2,500 individual drawings. Fleischer went on to animate Popeye the Sailor and Betty Boop this way, as well as characters in Gulliver’s Travels, Mr. Bug Goes to Town, Superman and his version of Snow White.

Later, Walt Disney animators used rotoscope techniques, beginning with the 1937 film Snow White and the Seven Dwarfs.

By the 1980s, animation techniques had advanced with computer-generated images. During the 1985 Super Bowl, viewers watched an innovative 30-second commercial made by visual effects pioneer Robert Abel and his team. To create the ad for the Canned Food Information Council, they painted dots onto a real woman performer as the basis for a “sexy” robot character that was then rendered on a computer.

Menache says similar technology had been used by the military to track aircraft, and in the medical field to diagnose conditions such as cerebral palsy. In the early 1990s, he advanced the technique by developing animation software for an arcade video game called Soul Edge.

“It was a Japanese ninja fighting game. And they brought a ninja from Japan,” he says. “We put markers on the ninja and we only had a seven by seven foot area where he could act because we only had four cameras. So the ninja spent maybe two weeks doing motions inside that little square. It was amazing to see. And then it took us maybe a month to process all that data.”

Will human performers be needed in the future?

Besides Spider-Man, Seth Allyn Austin has portrayed heroes, villains and creatures in such games as The Last of Us and Star Wars Jedi: Fallen Order. He says the technology has evolved even since he started a decade ago. He remembers wearing a suit with LED lights powered by a battery pack. “Whenever I did a flip, the battery pack would fly off,” he recalls. “I've had engineers have to try to solder the wires back on while I'm wearing the suit because it would save time. Luckily we've moved away from that technology.”

These days, he says, some new technologies allow performers to watch themselves performing on screen as fully animated characters in 3-D, reacting to animated settings and other characters.

“We can adjust our performance in real time to make it look even more creepy or cool or realistic or heroic,” he says. “That’s the thing with AI, the tool is pretty cool. The tool can help us a lot. But if the tool is used to replace us, then it’s not the tool, it’s who’s wielding it.”

Menache says replacing human performers for video games or films is unlikely any time soon.

“If you want it to look real, you can't animate,” he says. “There's a lot of very good animators, but their expertise is mostly for stylized motion. But real human motion: Some people get close, but the closer you get to that look, the weirder it looks. Your brain knows.”

He likens it to the phenomenon of the uncanny valley – as he describes it, “that one percent that is missing, that tells your brain something's wrong.”

How AI is changing video game development

Menache is now developing AI technology that doesn’t require people to wear sensors or markers. “To train the AI, you need data from people,” he says. “We don't just grab people's motions, we get their permission.”

For example, he says he could hire and film players from the LA Galaxy, as he once did in the 1990s. Their moves could be stored to train the AI model to develop new soccer video games. “With our new system,” he says, “they won't even need to go to the studio… You just need footage. And the more angles, the better.”

Menache has also developed technology for face tracking, for “de-aging” actors and for creating “deep fakes,” in which actors’ faces are scanned and altered. All of this, he says, still requires the consent of human performers.

Even AI still needs humans to train the models, says Menache. “I built a system for face tracking, and I trained it with maybe 2,000 hours of footage of different faces. And now it doesn't need to be trained anymore. But a face is a lot less complex than a full body,” he says, adding that a full-body system would need footage of “thousands and thousands of hours of people of different proportions.”

“Maybe you wouldn't need people to do that anymore,” he says, “but the people that were used to train it should get their piece of whatever this is useful. That's what the strike is all about. And I agree with that. We don't use any data that is not under permission from the performers.”

Editor’s note: Many NPR employees are members of SAG-AFTRA, but are under a different contract and are not on strike.